Current harms and catastrophic risks are not incompatible. Melanie Mitchell’s reply to Yoshua Bengio in a recent Munk Debate shows how discussions about the risks of artificial intelligence (AI) can often lead to misunderstandings. Of course, she was right. It is hard to kill 8 billion people. And if that’s the definition of an ‘existential’ risk, it indeed sets a very high bar. But does it imply that we should ignore catastrophic risks?

Participants in the debate over the risks of AI tend to fall into two categories. Some, the ‘AI doomers’, point out that AI systems might sooner rather than later wipe out humans. Others, the ‘AI hype critics’, dismiss these risks as hype and emphasize the current, real harms that AI poses, such as discrimination or misinformation. Consider a recent editorial published in Nature (my emphasis):

The idea that AI could lead to human extinction has been discussed on the fringes of the technology community for years. The excitement about the tool ChatGPT and generative AI has now propelled it into the mainstream. But, like a magician’s sleight of hand, it draws attention away from the real issue: the societal harms that AI systems and tools are causing now, or risk causing in future. Governments and regulators in particular should not be distracted by this narrative and must act decisively to curb potential harms. And although their work should be informed by the tech industry, it should not be beholden to the tech agenda.

This narrative implies that only one set of those risks can be ‘real’ and worthy of our attention. But this is a false dilemma. Mitigating current harms doesn’t imply giving up on the catastrophic risks, and vice versa, because addressing both sets of risks may involve similar solutions. For that reason, a more productive way of thinking about those risks and harms is to consider how the current harms may be linked to the longer-term, potentially catastrophic ones. In particular, might current risks be on a causal pathway to catastrophic ones?

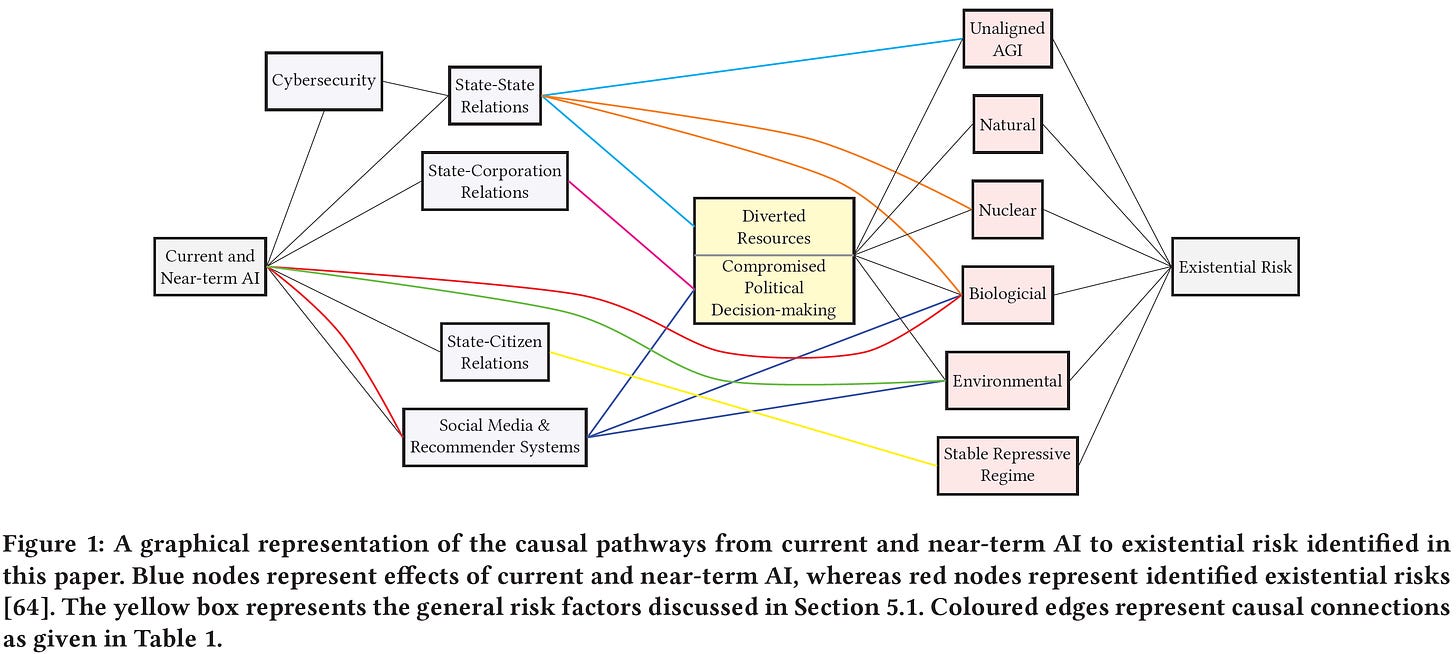

A paper by Bucknall and Dori-Hacohen explores precisely that idea. They identify risks posed by current and near-term AI capabilities and show how they might lead to more serious risks, for instance, nuclear war. Here is a figure from their paper.

In that model, current and near-term AI capabilities pose various risks, e.g. threats to information ecosystems via social media and recommender systems. These risks may be directly linked to catastrophic risks, such as the making of bioweapons. Or, they can be mediated by other processes such as disrupting political systems. For instance, AI systems might foster polarization, which might lead to escalating tensions between nuclear powers.

In a similar vein, a recent paper by Hendrycks, Mazeika, and Woodside identifies various sources of catastrophic AI risks, such as malicious use or organizational risks. They then examine how those risks might lead to more catastrophic ones. Although they mostly focus on the capabilities of more advanced AI systems, they also remark "that many existential risks could arise from AIs amplifying existing concerns".

This suggests that there is much more potential agreement between the AI doomers and the AI hype critics than meets the eye. Instead of debating the plausibility of AI systems posing catastrophic risks, it may be more productive to examine possible causal chains of risks. Crucially, the debate should be informed by more explicit modelling of how different risks interact with each other. In particular:

Are current risks on a possible causal pathway to other catastrophic ones?

Is the amplification of current risks potentially catastrophic?

If current risks are on a possible causal pathway to more catastrophic risks, mitigating the current risks may serve the dual purpose of also reducing the catastrophic ones. To illustrate, consider the case of misaligned, ‘rogue’, AI systems. In a nutshell, these are systems that do not pursue the goals we want them to pursue. In a post earlier this year, Yoshua Bengio explored how misaligned, rogue, superintelligent AIs may arise and bring about catastrophic risks. The timeline and plausibility of superintelligent AI are contentious. But that uncertainty is by and large irrelevant. Since misaligned AI systems already exist and cause harm, solving that problem now may come in handy if more advanced AI systems come into existence. [1]

Of course, this doesn’t mean that a single solution will solve all problems of a given type. For instance, preventing current harms caused by the malicious use of deepfakes may only partially overlap with preventing other malicious uses. We might also recognize the limitations of some techniques; e.g. reinforcement learning from human feedback is not a silver bullet for alignment. But if a technique cannot align less advanced systems, that is good evidence that it won’t work for the most advanced ones either.

Above, I wrote that choosing between the current and catastrophic risks was a false dilemma. Insofar as the two sets of risks may share the same causal roots, those roots should be the main target of debate. Accordingly, AI doomers need not, and indeed should not, ignore the current harms and risks of AI systems. As for AI hype critics, they need not deny the possibility of catastrophic risks. There is a lot of common ground to be found in our causal models of the present and future risks humans face.

[1] Which is also something Bengio acknowledges in a later post: "Additionally, there is a great overlap in the technical and political infrastructure required to mitigate both fairness harms from current AI and catastrophic arms [sic] feared from more powerful AI, i.e., having regulation, oversight, audits, tests to evaluate potential harm, etc."

Great analysis! Indeed it would be so much more productive if we’d quibble less about whether AI will go rogue, and more about real policy proposals that mitigate the immediate risks of the current status quo AND future harm (which naturally overlap a good bit).

A piece in Foreign Affairs written by Inflection CEO Mustafa Suleyman struck a nice balance on that, in my opinion: https://www.foreignaffairs.com/world/artificial-intelligence-power-paradox