Superintelligence alignment has a meta-problem

Superintelligence alignment doesn't have a purely technical dimension.

Earlier this summer, OpenAI announced the creation of its Superalignment team. Its goal? “Solve” superintelligence alignment. OpenAI states the problem of superintelligence alignment as follows:

“How do we ensure AI systems much smarter than humans follow human intent?”

We cannot ensure the alignment of current AI systems, let alone superintelligent ones. I recently listened to two podcasts (AXRP and 80,000 Hours) with Jan Leike, now co-lead of the Superalignment team at OpenAI. The discussions were captivating and I learned a lot. It’s also good to see OpenAI take the alignment problem seriously and commit resources to alignment research.

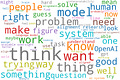

Unfortunately, the conversations only scratched the surface of some key foundational issues of alignment. Partly to get some insight into the conversations and partly for fun, I created a word cloud of the top 75 words in the transcripts.[1]
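For readers curious how a "top 75 words" ranking can be produced, here is a minimal sketch using only the Python standard library. It is not the code from the repository mentioned at the end of this post. The function name `top_words`, the tiny `STOP_WORDS` set, and the sample sentence are all assumptions made for illustration, and a real analysis would likely use a fuller stop-word list and a plotting library to draw the cloud itself.

```python
import re
from collections import Counter

# Hypothetical, deliberately tiny stop-word list (an assumption for this sketch).
STOP_WORDS = {"the", "a", "an", "and", "or", "of", "to", "in",
              "is", "it", "that", "we", "you", "i"}


def top_words(text, n=75, stop_words=STOP_WORDS):
    """Return the n most frequent non-stop-words as (word, count) pairs."""
    # Lowercase the text and keep runs of letters and apostrophes as words.
    words = re.findall(r"[a-z']+", text.lower())
    # Drop stop words and single-character tokens before counting.
    counts = Counter(w for w in words if w not in stop_words and len(w) > 1)
    return counts.most_common(n)


# Made-up sample text standing in for a podcast transcript.
sample = ("We think alignment is hard. I think scalable oversight "
          "helps alignment. Alignment matters.")
result = top_words(sample, n=3)
print(result)
```

The resulting list of word and count pairs is what a word cloud visualizes: the higher the count, the larger the word. Which words land in the cloud depends heavily on the stop-word list and on the cutoff `n`, so the absence of a word shows only that it was not among the most frequent, not that it was never said.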

One thing we can infer from the word cloud is that they think a lot. Which is good. 😀 Another is that the discussions revolved around the three strategies OpenAI is focusing on to tackle superalignment, namely:

Scalable oversight: Developing techniques aimed at reducing the effort it takes to evaluate increasingly powerful AI systems.

Generalization: Studying how AI systems can make inferences about novel, unseen data.

Interpretability: Understanding an AI system’s behaviour by making its functioning intelligible.

‘Scalable oversight’ and the associated notion of an ‘alignment researcher’ were common. ‘Automated alignment’ was also prominent and applies to both scalable oversight and ‘interpretability’. And although less discussed, how AI systems ‘generalize’ was also central. It stands to reason that the interviewers and Jan Leike spent a great deal of time discussing OpenAI’s approach to alignment in those terms. After all, those are the strategies the company pursues to address superintelligence alignment.

We can also see that the word cloud doesn’t contain words like ‘intent’ (the alleged target of alignment), ‘values’, ‘rights’, ‘safe’ or cognates of ‘fairness’ or ‘democracy’. Speaking of fairness, they did touch on some (not all) of those issues, especially in the 80,000 Hours podcast. But not often enough for them to show up in the word cloud. They were on the back burner.

Why? One reason for this is that alignment is viewed as having a purely technical dimension. Consider the following quote from Jan Leike in the AXRP podcast.

“Also, we need to solve technical alignment so we can actually align AI systems. The risks that superalignment is looking at is just the last part. We want to figure out the technical problem of how to align one AI system to one set of human values. And there’s a separate question of what should those values be? And how do we design a process to import them from society?”

According to Jan Leike, the alignment problem has many components. There is technical alignment, but there are other parts, like ethics or governance. The assumption is that solving technical alignment is, if not necessary, at least a crucial component of superintelligence alignment.

The meta-problem of superintelligence alignment

Many problems have distinct technical dimensions. Technical problems are usually well-defined and can be addressed using an established methodology. Furthermore, they typically don’t involve a substantial conflict of values. Optimizing how robots perform tasks on an assembly line to increase production efficiency and reduce waste may be one example of a technical problem. In its current stage, superintelligence alignment doesn’t have a purely technical dimension. The problem is not well-defined, we only have a rough idea of what a suitable solution would look like, and it involves conflicting values. In a nutshell, superintelligence alignment has a meta-problem.

Meta-problem of superintelligence alignment: Under what conditions would the problem of superintelligence alignment be solved?

The meta-problem raises two key issues. First, it’s not clear at all that the superintelligence alignment problem as formulated above is the one we want to solve. Specifically, human ‘intent’ is arguably not the goal of alignment. In an insightful paper on the goals of alignment, Iason Gabriel examines six candidates, namely instructions, intentions, revealed preferences, informed preferences, well-being, and values. He identifies three problems with intentions as the goal of alignment.

Intentions are only one part of the equation. This is because to follow intentions AI systems may need to understand other goals like preferences or values.

Intentions may be inadequate in scenarios where humans aren’t in a position to provide intentions, for instance when interacting with powerful AI systems.

Since intentions may be irrational, misinformed, or immoral, we may not want AI systems to always follow them.

Interestingly, although the goal is formally to make AI systems follow human intent, Jan Leike also sometimes formulates it in terms of values, as in the quote above. And an August 2022 post detailing OpenAI’s approach to alignment research starts like this:

“Our alignment research aims to make artificial general intelligence (AGI) aligned with human values and follow human intent.”

However, values and intent are different goals. Crucially, they may come apart. An AI system may follow its designer’s intent of manipulating humans, yet this behaviour would not be aligned with human values. In such cases, which goal should the AI system pursue, intent or values?

Suppose, for simplicity, that the goal of alignment is human values. A second key issue arises: we need values to evaluate whether an AI system is aligned with values! Viewing alignment as having a purely technical dimension suggests we can solve the technical problem for any arbitrary set of values and then determine which values AI systems should follow. However, values permeate the ‘technical’ problem. Let me give three examples.

What does it mean to be aligned with values? Values are abstract principles and standards of evaluation. Does being aligned with values mean being committed to those values or merely behaving in accordance with values, regardless of the commitment? Do we want AI systems to simply display appropriate behaviour, or behave for the right reasons? Having the right reasons often matters in evaluating the moral worth of actions. Deciding whether we want systems to behave for the right reasons isn’t a technical problem.

What constitutes good evidence of alignment? We currently don’t have good, accepted standards for what evidence supports the hypothesis that a system is (mis)aligned with values. For instance, OpenAI wants to deliberately train deceptive models in order to test whether its techniques can detect them. However, deception is an ambiguous and shifting notion, so it is unclear what such a test would establish. Likewise, OpenAI’s approach is empirical. But why prefer empirical to theoretical evidence? Choosing what constitutes good evidence for a hypothesis isn’t a technical problem.

When do solutions ensure alignment? Science is fallible. As a result, the possibility that we might be wrong about whether a system is aligned is always lurking. And there are risks in accepting a false negative or a false positive. For example, what if we wrongly believed a superintelligent AI system was aligned and then deployed it? Whether we should accept a hypothesis given the risks it poses isn’t a technical problem.

All those questions have a normative component in common. In other words, technical alignment solutions are entangled with choices about what epistemic and ethical values matter. One day, technical alignment might be a well-defined problem with a clear, accepted methodology. Until then, pace OpenAI’s ambitious goals, we also need to grapple with the meta-problem of superintelligence alignment.

[1] For those interested, you can find some quick methodological notes, the transcript files, and the Python code in this GitHub repository.